desktop

What is a desktop?

A desktop is a computer display area that represents the kinds of objects found on top of a physical desk, including documents, phone books, telephones, reference sources, writing and drawing tools, and project folders.

A desktop can be contained in a window that is part of the total display area or can be full screen, taking up the total display area. Users can have multiple desktops for different projects and work environments, and they can switch between them.

A desktop on a computer display is different from a desktop computer, which is a personal computer (PC) that sits on a desk or table.

How does a desktop work?

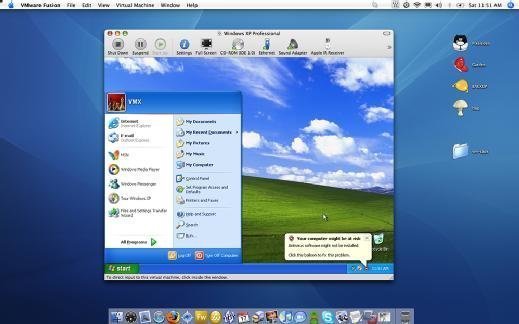

Operating systems (OSes), such as Microsoft Windows, Apple macOS and Android, provide desktop functions in a graphical user interface (GUI) format on one or more display units of a PC or mobile device. Depending on the desktop software, users can employ the standard OS desktop environment or customize it.

Desktop environments provide access to utility programs and applications, typically through visual icons designed to identify specific apps. These icons appear in the desktop viewing area, often on the desktop background or in a taskbar that displays frequently used functions. Most desktops also give users considerable control over system settings and capabilities.

Why are desktops important?

Whether a user is on a smartphone, tablet, laptop computer or desktop PC, they're interacting with a desktop interface that simplifies access to system functions. On smartphones and tablets, the desktop is equivalent to the home screen. Whatever the type of computer or device, it's difficult to imagine life without today's powerful desktop environments.

In the days before GUI-based desktops, users had to type commands or enter keystrokes to launch a specific application. Knowledge of various control functions and their associated keystrokes was helpful in launching functions such as cut, copy, save and print. For example, pressing the <control> key and the letter "C" activates the copy function. Pressing <control> and "S" launches the save function.

Memorizing control functions is largely optional today, although they remain available as keyboard shortcuts. Users need only move the cursor to a specific icon and click it to launch a function or application. Common Windows desktop icons include the My Computer, My Documents and Recycle Bin icons. Desktops have greatly simplified the user-machine interface, improving productivity and convenience.

Xerox is credited with introducing the GUI desktop in the 1970s, and Apple and Microsoft with popularizing it. Today, nearly all modern desktop OSes include a GUI desktop. This is true of Windows, Apple macOS and Linux.

The history of desktops

It is tempting to think of the desktop as synonymous with the Windows GUI, but the concept of a desktop predates the Windows OS and GUI. Some key dates in the history of computer desktops include the following:

1984. Tandy releases a text-based desktop called DeskMate. Users could work with DeskMate to open applications and documents and browse disk contents.

1985. Microsoft releases Windows 1.0 with a graphical desktop.

1997. Microsoft introduces Active Desktop, which accompanies Internet Explorer 4.0. Active Desktop displays HyperText Markup Language content directly on the Windows desktop. It was first available as an optional component for Windows 95 and was included in Windows 98 and later versions before it was discontinued with Windows Vista.

2001. Microsoft releases Windows XP with its Remote Desktop function, which lets users connect to a computer running Windows XP from across a network or the internet to access their applications, files, printers and devices.

2012. With Windows 8, Microsoft changes its traditional desktop layout, eliminating the Start menu and introducing the Metro interface. Microsoft designed Metro to compete with mobile operating systems such as Apple iOS. Although Windows 8 included a desktop layout, it forced users to toggle back and forth between the desktop and Metro interfaces, depending on which application they were using. The hallmark of the Metro interface was live tiles that could display application data such as weather information and stock market reports, as opposed to acting as static desktop icons.

2015. Microsoft releases Windows 10, bringing back the desktop Start menu and merging Metro and the legacy Windows desktop into a blended desktop interface.

2021. With Windows 11, Microsoft integrates Microsoft Teams into the taskbar and redesigns the interface, making it more like the Apple Mac interface.

What is a virtual desktop?

A virtual desktop refers to a desktop OS, such as Windows 10, that runs on top of an enterprise hypervisor. Users employ a virtual desktop infrastructure (VDI) to access virtual desktops through thin clients. A remote desktop protocol transmits screen images and keyboard and mouse inputs between the user's device and the server on which the virtual desktop runs.
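The exchange between a thin client and a virtual desktop can be illustrated with a short Python sketch. It is a toy model under stated assumptions, not an implementation of any real remote desktop protocol: the names InputEvent, VirtualDesktop and ThinClient are hypothetical, there is no network layer, and a "screen image" is reduced to a line of text.

```python
from dataclasses import dataclass


@dataclass
class InputEvent:
    """A keyboard or mouse event sent from the client to the server (hypothetical type)."""
    kind: str   # "key" or "click"
    value: str  # the key typed, or a label for what was clicked


@dataclass
class VirtualDesktop:
    """Toy stand-in for a desktop OS session running on a server."""
    text: str = ""

    def handle(self, event: InputEvent) -> None:
        # Apply the input to the session state, which lives only on the server.
        if event.kind == "key":
            self.text += event.value

    def render(self) -> str:
        # A real protocol would send encoded screen regions;
        # here the "screen image" is just a string.
        return f"[screen] {self.text}"


class ThinClient:
    """Holds no application state; it forwards input and displays frames."""

    def __init__(self, server: VirtualDesktop) -> None:
        self.server = server
        self.last_frame = ""

    def send(self, event: InputEvent) -> None:
        self.server.handle(event)               # input travels client -> server
        self.last_frame = self.server.render()  # screen update travels server -> client


desktop = VirtualDesktop()
client = ThinClient(desktop)
for ch in "hi":
    client.send(InputEvent("key", ch))
print(client.last_frame)  # [screen] hi
```

The point of the sketch is the division of labor: the typed text is stored in the server-side session, while the client keeps only the most recent frame it was sent.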

Consumer vs. enterprise desktops

From a functional standpoint, there's no difference between a consumer desktop and an enterprise desktop. Enterprise desktops often are more tightly controlled. They're commonly branded with wallpaper containing an organization's logo and include icons associated with applications approved by the organization's IT department.
