desktop virtualization

What is desktop virtualization?

Desktop virtualization is the concept of isolating a logical operating system (OS) instance from the client used to access it.

There are several different conceptual models of desktop virtualization, which can be broadly divided into two categories based on whether the technology executes the OS instance locally or remotely. It's important to note that not all forms of desktop virtualization technology involve the use of virtual machines (VMs).

How desktop virtualization works

In its most common form, desktop virtualization employs hardware virtualization technology. Virtual desktops exist as VMs running on a virtualization host. These VMs share the host server's processing power, memory and other resources.

Users typically run a remote desktop protocol (RDP) client to access the virtual desktop environment. This client attaches to a connection broker that links the user's session to a virtual desktop. Typically, virtual desktops are nonpersistent, meaning the connection broker assigns the user a random virtual desktop from a virtual desktop pool. When the user logs out, this virtual desktop resets to a pristine, unchanged state and returns to the pool. However, some vendors offer an option to create persistent virtual desktops, in which users receive their own writable virtual desktop.
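The broker behavior described above can be sketched in a few lines of Python. This is an illustrative toy, not any vendor's implementation: the class name `ConnectionBroker`, its methods and the desktop names are all hypothetical, and the "reset" step is only a comment where a real platform would revert the VM to its base image.

```python
import random


class ConnectionBroker:
    """Toy connection broker for a pool of virtual desktops (hypothetical API)."""

    def __init__(self, desktop_names):
        # Idle nonpersistent desktops, each assumed to be in a pristine state.
        self.pool = set(desktop_names)
        # Maps a logged-in user to the desktop serving the current session.
        self.sessions = {}
        # Maps a user to a dedicated, writable (persistent) desktop.
        self.persistent = {}

    def assign_persistent(self, user, desktop):
        """Reserve a desktop for one user and take it out of the shared pool."""
        self.pool.discard(desktop)
        self.persistent[user] = desktop

    def connect(self, user):
        """Link the user's session to a virtual desktop and return its name."""
        if user in self.sessions:
            return self.sessions[user]
        if user in self.persistent:
            desktop = self.persistent[user]
        else:
            if not self.pool:
                raise RuntimeError("no virtual desktops available in the pool")
            # Nonpersistent: any idle desktop will do, so pick one at random.
            desktop = random.choice(sorted(self.pool))
            self.pool.remove(desktop)
        self.sessions[user] = desktop
        return desktop

    def logout(self, user):
        """End the session; a nonpersistent desktop returns to the pool."""
        desktop = self.sessions.pop(user)
        if self.persistent.get(user) != desktop:
            # A real platform would reset the VM to its unchanged state here.
            self.pool.add(desktop)
        return desktop


if __name__ == "__main__":
    broker = ConnectionBroker(["vd-01", "vd-02", "vd-03"])
    broker.assign_persistent("alice", "vd-03")
    print(broker.connect("alice"))  # alice always gets her own desktop
    print(broker.connect("bob"))    # bob gets whichever pooled desktop is picked
    broker.logout("bob")            # bob's desktop goes back to the pool
```

The sketch keeps persistent desktops out of the shared pool entirely, which is one simple way to guarantee that a user's writable desktop is never handed to someone else.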

Desktop virtualization deployment types

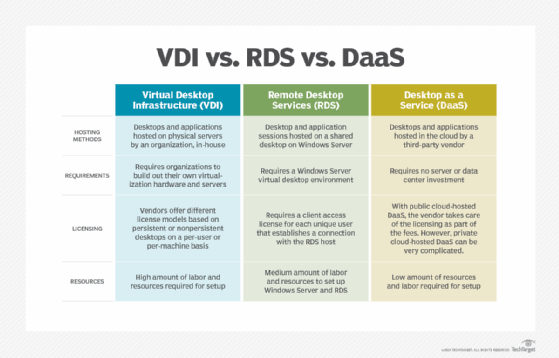

There are three main types of desktop virtualization: virtual desktop infrastructure (VDI), Remote Desktop Services (RDS) -- formerly, Terminal Services -- and desktop as a service (DaaS).

When most people think of desktop virtualization, VDI is probably the first thing that comes to mind. VDI is a technology in which physical servers host virtual desktops in an organization's own data center. Like server virtualization, VDI relies on underlying hardware virtualization technology. Users sometimes use the terms desktop virtualization and VDI interchangeably, but they aren't the same.

While VDI is a type of desktop virtualization, not all types of desktop virtualization use VDI. VDI refers exclusively to the use of host-based VMs to deliver virtualized desktops, which emerged in 2006 as an alternative to Terminal Services and Citrix's client-server approach to desktop virtualization technology.

Other types of desktop virtualization -- including the shared hosted model, host-based physical machines and all methods of client virtualization -- aren't examples of VDI.

RDS is also an on-premises desktop virtualization technology. Unlike VDI, however, RDS doesn't rely on hardware virtualization, nor does it use desktop OSes. Instead, a server running Windows Server acts as a Remote Desktop Session Host that multiple users share. One potential disadvantage of this method is application compatibility. RDS runs desktop applications on Microsoft Windows Server, and an application that's designed to run on Windows 10 won't necessarily run on Windows Server. This is especially true for Microsoft Store apps.

DaaS is a public cloud-based desktop virtualization service that vendors offer. Organizations lease virtual desktops on an as-needed basis from a cloud provider. DaaS is generally accessible from anywhere using an RDP client.

Choosing a deployment model

The primary decision that organizations must make when they choose a deployment model is whether to deploy an on-premises VDI platform or subscribe to a cloud-based DaaS provider.

An on-premises platform is best suited to organizations that have already acquired, or have the budget to purchase, server hardware and any other required resources. An on-premises platform might also be a good choice for organizations that wish to repurpose their existing desktop OS licenses. Lastly, on-premises VDI is a good fit for organizations that lack the internet bandwidth needed to support a cloud computing DaaS offering.

A cloud-based option tends to be a good fit for organizations that don't have the IT expertise or budget to support an on-premises virtual desktop deployment. Cloud-based deployments are also well suited to organizations that employ seasonal or temporary workers because administrators can add or remove end-user capacity on an as-needed basis without incurring a significant investment in server hardware.
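As a rough illustration, the criteria in the last two paragraphs can be written as a simple tally. The `OrgProfile` fields, the function name and the equal weighting of each criterion are assumptions made for this sketch; a real decision would weigh costs and requirements in far more detail.

```python
from dataclasses import dataclass


@dataclass
class OrgProfile:
    """Hypothetical yes/no answers that mirror the criteria in the text."""
    has_server_hardware_or_budget: bool
    wants_to_reuse_os_licenses: bool
    has_sufficient_internet_bandwidth: bool
    has_in_house_vdi_expertise: bool
    has_seasonal_or_temporary_workers: bool


def suggest_deployment_model(org: OrgProfile) -> str:
    """Return 'on-premises VDI', 'DaaS' or 'either' from an unweighted tally."""
    # Points in favor of hosting virtual desktops in the organization's data center.
    on_prem = sum([
        org.has_server_hardware_or_budget,
        org.wants_to_reuse_os_licenses,
        not org.has_sufficient_internet_bandwidth,
    ])
    # Points in favor of leasing desktops from a cloud provider.
    daas = sum([
        not org.has_server_hardware_or_budget,
        not org.has_in_house_vdi_expertise,
        org.has_seasonal_or_temporary_workers,
    ])
    if on_prem > daas:
        return "on-premises VDI"
    if daas > on_prem:
        return "DaaS"
    return "either"


if __name__ == "__main__":
    retailer = OrgProfile(
        has_server_hardware_or_budget=False,
        wants_to_reuse_os_licenses=False,
        has_sufficient_internet_bandwidth=True,
        has_in_house_vdi_expertise=False,
        has_seasonal_or_temporary_workers=True,
    )
    print(suggest_deployment_model(retailer))
```

A tie returns "either" on purpose: when the criteria don't point clearly one way, the tally alone shouldn't make the call.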

Types of desktop virtualization technologies

Host-based forms of desktop virtualization require end users to view and interact with their virtual desktops over a network using a remote display protocol. Because all processing takes place in a data center, user devices can be traditional PCs, thin clients, zero clients, smartphones or tablets.

Examples of host-based desktop virtualization technology include the following.

Host-based VM. Each user connects to an individual VM that a data center hosts. The user can connect to the same persistent desktop every time or access a fresh nonpersistent desktop with each login.

Shared host. Users connect to a shared desktop that runs on a server. RDS takes this client-server approach. Users can also connect to individual applications running on a server; this technology is an example of application virtualization.

Host-based physical machine. The OS runs directly on another device's physical hardware.

Client virtualization, by contrast, requires processing to occur on local hardware. Examples of client virtualization include the following.

OS image streaming. The OS runs on local hardware, but it boots to a remote disk image over the network. This is useful for groups of desktops that use the same disk image. OS image streaming, also known as remote desktop virtualization, requires a constant network connection to function.

Client-based VM. This is a VM that runs on a fully functional PC with a hypervisor in place. Administrators can manage client-based VMs regularly by syncing the disk image with a server, but a constant network connection isn't necessary for them to function.
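The five models above differ mainly in where the OS executes and whether a constant network connection is required. A small lookup table makes that comparison explicit. The `DesktopModel` type and the `candidate_models` helper are hypothetical names introduced for this sketch, and the attribute values simply restate the descriptions above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DesktopModel:
    name: str
    runs_on: str                  # "host" (data center) or "client" (local hardware)
    needs_constant_network: bool  # True if the desktop stops working offline


MODELS = [
    DesktopModel("host-based VM", "host", True),
    DesktopModel("shared host", "host", True),
    DesktopModel("host-based physical machine", "host", True),
    DesktopModel("OS image streaming", "client", True),
    DesktopModel("client-based VM", "client", False),
]


def candidate_models(device_can_run_os_locally: bool, needs_offline_use: bool):
    """List the models that fit a given endpoint and connectivity requirement."""
    return [
        m.name
        for m in MODELS
        # Client-side models need a device able to run the OS itself.
        if (m.runs_on == "host" or device_can_run_os_locally)
        # Offline use rules out anything that depends on a constant connection.
        and not (needs_offline_use and m.needs_constant_network)
    ]


if __name__ == "__main__":
    # A thin client with a reliable network connection.
    print(candidate_models(device_can_run_os_locally=False, needs_offline_use=False))
    # A full PC that must keep working without a connection.
    print(candidate_models(device_can_run_os_locally=True, needs_offline_use=True))
```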

Desktop virtualization vs. server virtualization

Server virtualization is the abstraction of a server OS and its apps from the physical machine. This creates virtual servers that run on a physical server, on top of a hypervisor or the host's own operating system. In such a configuration, many VMs can run on a single physical server, each with its own OS and apps.

In desktop virtualization, the OS and apps are abstracted from the physical client device, such as a thin client, which connects to them over a network or the internet. In this model, the desktop is completely hardware-independent, an obvious upside. On the other hand, the bandwidth requirement for such a deployment is significant and can create difficulties during peak usage.

Desktop virtualization vs. app virtualization

Application virtualization differs from desktop virtualization in that it isolates the app itself, rather than the app and the OS executing it. As with desktop virtualization, however, it's hardware-independent, allowing the app to run on almost any client. It's also independent of the OS running on the endpoint.

Installation and maintenance of apps are thus greatly simplified, as only one installation is required and only one instance requires updates. Deployment is a bit more cumbersome than with a virtual desktop, as a preconfigured executable must be run on user devices, but this is a minor concern. On the other hand, apps can be retired from many end users' devices at once by simply deleting them on the app server.

Benefits of desktop virtualization (and some drawbacks)

Like any other technology, desktop virtualization has both advantages and disadvantages. One of its primary advantages is that it often makes it easier for IT professionals to manage the desktop environment. Rather than maintaining countless physical desktops, administrators can focus their attention on a small number of desktop images that they deploy to the users.

Conversely, there are some circumstances in which the use of desktop virtualization can increase an organization's management burden and its licensing costs. For example, if an organization allows users to connect to virtual desktops from their physical desktops, then the IT staff must license and maintain both the physical and virtual desktops.

In this example, each user is consuming two desktop OS licenses -- one for the physical desktop and one for the virtual desktop -- and two IP addresses. If an organization provides its users with virtual desktops, it's usually best to provide connectivity to those virtual desktops through thin clients, zero clients or bring your own device (BYOD) hardware.

Another advantage to desktop virtualization is that users can access their virtual desktops from anywhere. Even if a user is working from home or a hotel room, they can still work from the same desktop environment that they use in the office.

A potential disadvantage, however, is that virtual desktops can't function without connectivity to the VDI environment. As such, an internet connectivity failure or a server hardware failure could make an organization's virtual desktops inaccessible to users.

Virtual desktop providers

As the presence of VDI grew, VMware -- which coined the term VDI -- joined Citrix and Microsoft as leaders in the desktop virtualization market. Other examples of vendors that offer various desktop virtualization technologies include Amazon Web Services, Hewlett Packard Enterprise, Oracle, Parallels International, Red Hat and Workspot.

There are also many third-party vendors with products and services that are designed to improve the management, security and usability of virtual desktops. These offerings range from monitoring and application management tools to user environment management and bandwidth optimization software.
